Deep learning models have revolutionized various domains, such as computer vision, natural language processing, and speech recognition. However, as these models become increasingly complex and opaque, the interpretation of their decision-making processes has become crucial for building trust and ensuring reliability. Among the various interpretation methods, visualizing feature maps or learned weights is the most intuitive and convincing approach for users to understand the reasoning behind the model's predictions. In convolutional neural networks (CNNs), which have become the primary choice for feature extraction in computer vision, gradient-based interpretation (Simonyan and Zisserman, 2014), region-based visualization (Wang et al., 2020b), and Class Activation Mapping (CAM) (Zhou et al., 2016) are the most widely used methods for explaining convolutional operations.

Gradient-based approaches, such as Simonyan and Zisserman (2014), Adebayo et al. (2018), Omeiza et al. (2019), Springenberg et al. (2014), Sundararajan et al. (2017), and Zeiler and Fergus (2014), backpropagate the gradient of the target class to the input layer, highlighting image regions that significantly impact the prediction. However, these methods often generate noisy and incomplete activation maps, focusing primarily on edge or texture features while neglecting fine-grained information. Moreover, the gradients of CNNs may vanish or explode due to the saturation problem in the activation functions, such as Sigmoid or ReLU (Zhang et al., 2021b), further compromising the quality of the activation maps.

CAM (Zhou et al., 2016) and its extensions, such as GradCAM (Selvaraju et al., 2017) and GradCAM++ (Chattopadhay et al., 2018), provide visual explanations by linearly combining weighted activation maps from convolutional layers. Despite their effectiveness, these methods have limitations: CAM is architecture-sensitive and requires modifying the network structure, while GradCAM and GradCAM++ may activate irrelevant parts, such as the background, due to gradient noise. Furthermore, these methods may generate incomplete activation maps that fail to capture the entire object of interest, as they rely on the gradients of the target class, which may not cover all the discriminative regions.

Region-based methods, such as ScoreCAM (Wang et al., 2020b) and GroupCAM (Zhang et al., 2021a), calculate the importance of activation maps using the category confidence of corresponding input features rather than local region gradients. Although these methods can effectively remove background areas, they may generate incomplete activation maps and have high computational costs. Moreover, these methods do not fully exploit the information from the gradients, which can provide valuable insights into the model's decision-making process.

To address these limitations and provide a more accurate and comprehensive visual interpretation of deep CNNs, we propose UnionCAM, a novel method that employs a “denoising-union-selection” strategy to generate class activation maps. The main contributions of this paper are as follows:

• To effectively remove background noise from gradient-based activation maps and mitigate challenges such as gradient noise and vanishing gradients, we introduce the Activation Map Denoising (AMD) module. It applies a denoising function to the gradients, which enables the AMD module to better capture discriminative regions by generating more accurate and reliable activation maps.

• We propose the Activation Map Union (AMU) module, combining the denoised activation maps from AMD with region-based activation maps, to integrate the advantages of gradient-based and region-based methods. AMU generates more complete and informative activation maps by capturing both fine-grained details and global context, offering a more comprehensive understanding of the model's decision-making process.

• To select the most informative activation map from the union set generated by AMU, we further develop the Activation Map Selection (AMS) module. AMS employs a novel scoring function that considers both the discriminative power and the spatial consistency of the activation maps, ensuring that the selected map provides the most accurate and reliable visual interpretation. This module further enhances the interpretability and trustworthiness of the generated explanations.

• Through extensive experiments on various benchmarks, we demonstrate that UnionCAM achieves state-of-the-art performance in visual interpretation, outperforming existing methods in terms of both accuracy and completeness. UnionCAM effectively addresses the problems of incomplete activation and background activation, providing a more trustworthy and interpretable visualization of deep CNNs. The superior performance of UnionCAM highlights its potential for facilitating the understanding and debugging of deep learning models in real-world applications.

2 Related work

Feature or weight visualization enhances model transparency and understanding by illustrating how decisions are made. It aids in understanding the human brain, facilitates early diagnosis of conditions, improves the accuracy of prediction systems, and helps detect potential failures, among other benefits (Zong et al., 2024; Yu et al., 2022). CAM (Zhou et al., 2016) is one of the pioneering works: it uses a weighted sum of the feature maps from the last convolutional layer to generate class-specific activation maps, and it has inspired numerous subsequent developments in the field. In this paper, we review recent relevant works and categorize them into three types: gradient-based, gradient-free, and ensemble methods. Additionally, some feature visualization methods, such as GAN-based approaches, also offer valuable tools for understanding and interpreting model behavior.

2.1 Gradient-based methods

Gradient-based methods use the gradients of the model's output with respect to the input or intermediate feature maps to highlight the important regions. GradCAM (Selvaraju et al., 2017) generalizes CAM to models without global average pooling by using the gradients of the target class score with respect to the feature maps. Building on this work, a range of gradient-based methods have been developed to enhance granularity, such as GradCAM++ (Chattopadhay et al., 2018), Smooth GradCAM++ (Omeiza et al., 2019), XGradCAM (Fu et al., 2020), Augmented GradCAM (Morbidelli et al., 2020), and Integrated GradCAM (Sattarzadeh et al., 2021), among others. LayerCAM (Jiang et al., 2021) enhances the reliability of CAMs by incorporating information from various layers through weighted aggregation, offering a more detailed coarse-to-fine aggregation solution. Despite their computational efficiency, gradient-based methods may capture irrelevant information in the activation maps, since the feature maps are not always related to the target class (Zhang et al., 2021b).

2.2 Gradient-free methods

Gradient-free CAMs, on the other hand, aim to identify the importance of different input regions by occluding or perturbing them and observing the effect on the model's output (Zhang et al., 2021b; Selvaraju et al., 2017; Kapishnikov et al., 2019; Zhang et al., 2018; Liu et al., 2021b; Yan et al., 2021; Ahn et al., 2019; Liu et al., 2021a; Liang et al., 2022; Li et al., 2021; Cui et al., 2021; Ranjan et al., 2019; Lu et al., 2023; Jiao et al., 2018). One of the earliest works, RISE (Petsiuk et al., 2018), generates random binary masks that occlude different parts of the input image, records the prediction score for each masked image, and then uses a linear combination of these masks and their scores to obtain the final importance map. Although effective, it is inefficient because it needs thousands of random masks. ScoreCAM (Wang et al., 2020b) improves upon RISE by using the activation maps as the initial masks and combining them with the model's output scores to generate more accurate activation maps, spearheading methods such as Smooth ScoreCAM (Wang et al., 2020a), Integrated ScoreCAM (Naidu et al., 2020), FIMF ScoreCAM (Li et al., 2023), and GroupCAM (Zhang et al., 2021a), among others. In contrast, AblationCAM (Ramaswamy et al., 2020) uses the effective slope, defined as the difference between the original prediction score and the prediction score derived from an ablated activation map; based on this work, AblationCAM++ (Salama et al., 2022) further introduces clustering to group activation maps for improved efficiency. ReciproCAM (Byun and Lee, 2022) significantly accelerates execution by using the reciprocal relationship between activation maps and predictions, further inspiring the development of ViT-ReciproCAM (Byun and Lee, 2023) for Vision Transformers (ViT). Although gradient-free CAMs generally produce more human-interpretable explanations, they may generate incomplete activation maps due to the presence of salient regions that are not necessarily related to the target class.

2.3 Ensemble methods

To address the limitations of gradient-based and gradient-free methods, some approaches combine the two. FDCAM (Li et al., 2022) combines gradient-based and score-based weights to derive the CAM weightings, harnessing the strengths of both techniques. Feature CAM (Clement et al., 2024) combines perturbation and activation solutions for fine-grained, class-discriminative visualizations. Grad++-ScoreCAM (Soomro et al., 2024) enhances CNN interpretability and localization by first generating a coarse heatmap with GradCAM++ and then refining it with ScoreCAM to incorporate intermediate layer information. Our proposed method, UnionCAM, also falls into this category: by denoising the gradient-based activation maps and then merging them with the region-based maps using confidence-based weights, UnionCAM generates more accurate and complete visual explanations. In the following sections, we describe the proposed method in detail and demonstrate its effectiveness through comprehensive experiments.

2.4 Feature visualization via generation methods

Methods based on generative models also play an important role in feature visualization. GANs serve as an insightful tool that clarifies the decision-making process and offers effective support for diverse tasks (Bau et al., 2018; Yu et al., 2022; Lang et al., 2021). Bau et al. (2018) introduce an analytical framework for visualizing and understanding GANs at the levels of units, objects, and scenes. Lang et al. (2021) train a generative model to clarify the various attributes that contribute to classifier decisions. Yu et al. (2022) propose the multidirectional perception generative adversarial network (MP-GAN) to visualize morphological features in whole-brain MR images. In addition, diffusion model-based feature visualization methods provide visualization strategies from a different perspective. VPD (Zhao et al., 2023) refines text features and prompts the denoising decoder for better interaction between visual and textual information, using cross-attention maps for guidance. NeuroDM (Qian et al., 2024) first extracts visual-related features with high classification accuracy from EEG signals using EV-Transformer, and then employs EG-DM to synthesize high-quality images from these EEG visual-related features.

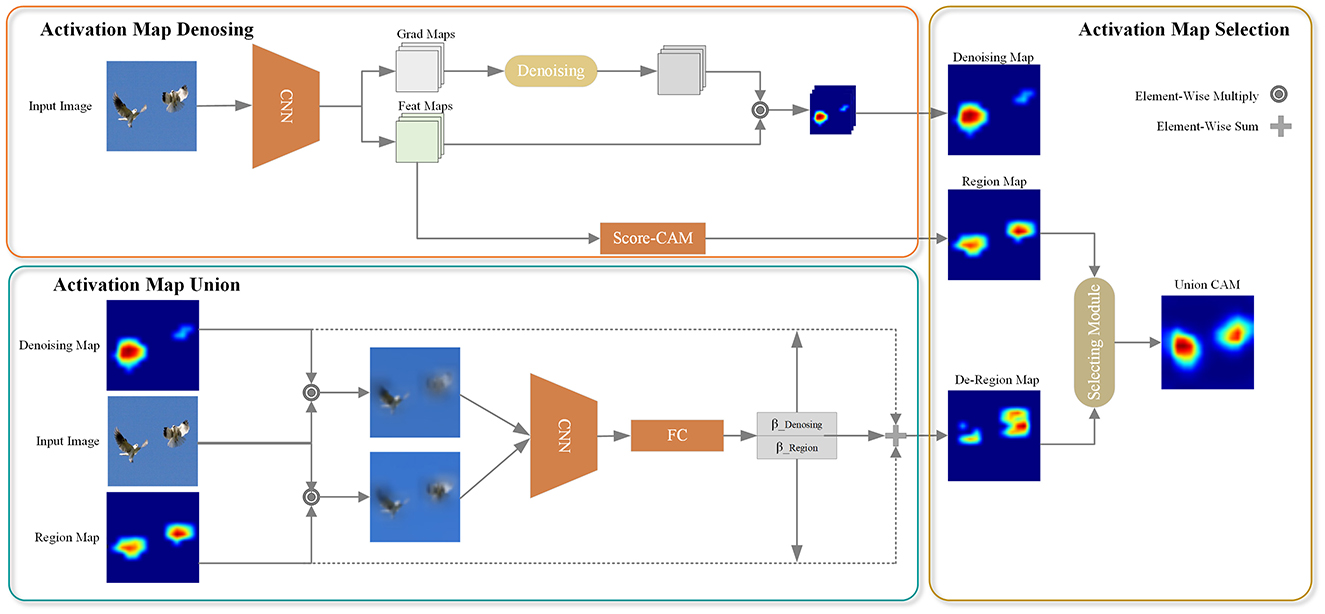

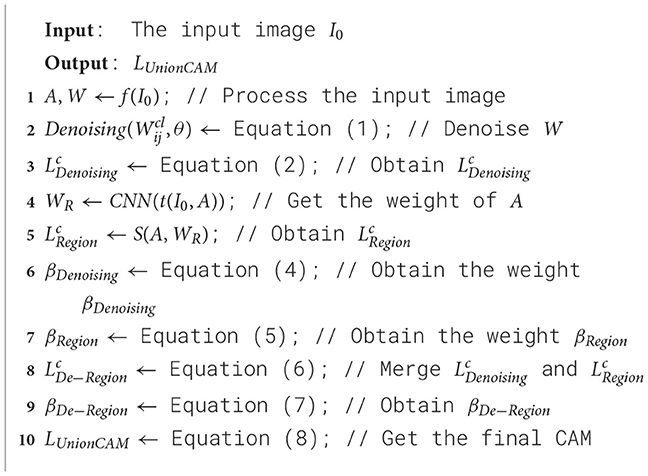

3 Methodology

The overall architecture of the proposed UnionCAM is illustrated in Figure 1, and we also present the pseudocode in Algorithm 1. This section provides a detailed explanation of the three key modules in the proposed method: Activation Map Denoising (AMD), Activation Map Union (AMU), and Activation Map Selection (AMS). Let $I_0 \in \mathbb{R}^{3 \times M \times N}$ be an input image, where $M$ and $N$ represent the height and width of the image, respectively. Let $I_b \in \mathbb{R}^{3 \times M \times N}$ be a black image with the same dimensions as $I_0$. We denote by $f(\cdot)$ a deep neural network that predicts a score $y^c = f_c(I_0) \in \mathbb{R}$ for class $c$ given an input image $I_0$.

Figure 1. Pipeline of UnionCAM. The AMD module removes meaningless background from the gradients to produce cleaner CAMs. The AMU module then generates a more complete CAM by uniting the denoised CAMs and the region-based CAMs, using the class confidence as the weight. The AMS module selects the CAM with the better interpretation effect. ⊙ denotes element-wise multiplication, applied between weights and feature maps; ⊕ denotes addition.

Algorithm 1. UnionCAM.

3.1 Activation map denoising

After the backbone network extracts features, the feature map and the corresponding backpropagated gradient of each channel can be obtained, shown as “Feat Maps” and “Grad Maps” in Figure 1. However, the gradients of CNNs may be noisy and may even vanish because of saturation in the zero-gradient regions of the Sigmoid or ReLU functions (Zhang et al., 2021b). To address this issue, we propose an activation map denoising (AMD) method, as illustrated in the “Activation Map Denoising” part of Figure 1. The AMD module applies a function that denoises the gradient obtained from the backbone network. For convenience, the gradient is denoted as $W$ here.

For each channel of $W$, the θ-th percentile is calculated as the denoising threshold. If a gradient value is greater than or equal to the threshold, it remains unchanged; otherwise, it is set to 0. This denoising operation is reasonable because positions with relatively small gradient values have a high probability of being background areas unrelated to the target. In this way, we can remove background regions that are independent of the target, thereby improving how well the class activation maps localize it.
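The thresholding rule can be sketched in a few lines of NumPy. This is a minimal illustration rather than the authors' implementation: the function name `denoise_gradients`, the `(C, H, W)` array layout, and the default `theta=10.0` (taken from the experimental setup) are assumptions made for the example.

```python
import numpy as np


def denoise_gradients(grads: np.ndarray, theta: float = 10.0) -> np.ndarray:
    """Zero out, per channel, gradient values below the theta-th percentile.

    grads: hypothetical array of shape (C, H, W), one gradient map per channel.
    theta: percentile in [0, 100] used as the denoising threshold.
    """
    # One threshold per channel, computed over that channel's spatial positions.
    thresholds = np.percentile(grads, theta, axis=(1, 2), keepdims=True)
    # Keep values at or above the threshold; set everything else to 0.
    return np.where(grads >= thresholds, grads, 0.0)
```

A larger `theta` removes more low-gradient positions, so it trades background suppression against the risk of also removing weakly activated parts of the target.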

In addition to the illustration of the denoising process in Figure 1, we formulate the denoising function in this section. For a scalar $W_{ij}^{cl}$ in $W$, the denoising function can be formulated as:

$$
\mathrm{Denoising}(W_{ij}^{cl}, \theta) =
\begin{cases}
W_{ij}^{cl}, & W_{ij}^{cl} \ge p(W^{cl}, \theta); \\
0, & \text{otherwise},
\end{cases}
\tag{1}
$$

where $p(W^{cl}, \theta)$ calculates the θ-th percentile of the $l$-th layer $W^{cl}$ for the specific category $c$. With the denoised weighting maps, the class activation map is obtained as the weighted sum of the class-related feature maps, which can be formulated as

$$
L^{c}_{\mathrm{Denoising}} = \sum_{l} \alpha^{cl} \circ \mathrm{ReLU}(W^{cl}) \circ A^{l},
\tag{2}
$$

where $\circ$ is the Hadamard product and $A^{l}$ is the feature map of the $l$-th layer. The weight $\alpha$ is the pixel-level average coefficient, which is defined as:

$$
\alpha_{ij}^{cl} =
\begin{cases}
\dfrac{1}{\sum_{m,n}\left(W_{mn}^{cl}\,\mathbb{I}(W_{mn}^{cl})\right)}, & \text{if } W_{ij}^{cl} > 0; \\
0, & \text{otherwise},
\end{cases}
\tag{3}
$$

where $\mathbb{I}(\cdot)$ is an indicator function that checks whether its argument is greater than 0, and $W_{ij}^{cl}$ is the gradient value at position $(i, j)$ in the denoised gradient $W$ of the $l$-th channel. The locations where the gradient values are greater than 0 are most likely the locations of the target. Using pixel-level average coefficients avoids excessive channel weights in small activation areas, which would otherwise lead to significant activation problems. After the above process, the denoised gradient-based class activation map, denoted as $L^{c}_{\mathrm{Denoising}}$, is obtained. A high-quality $L^{c}_{\mathrm{Denoising}}$ serves as the basis for the upcoming soft and hard integration strategy, ensuring that the model can effectively leverage refined features.
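As a rough sketch, Equations 2 and 3 can be combined into one NumPy function. It assumes that the denominator in Equation 3 is the per-channel sum of positive gradient values, that gradients and feature maps share the shape `(C, H, W)`, and that the sum in Equation 2 runs over the first axis; the name `denoised_cam` is hypothetical.

```python
import numpy as np


def denoised_cam(grads: np.ndarray, feats: np.ndarray) -> np.ndarray:
    """Combine denoised gradients and feature maps into one activation map.

    grads: denoised gradients, assumed shape (C, H, W).
    feats: feature maps with the same assumed shape (C, H, W).
    Returns an (H, W) map.
    """
    positive = np.maximum(grads, 0.0)  # ReLU(W)
    # Per-channel sum of W * I(W > 0), i.e. the sum of positive gradient values.
    denom = positive.sum(axis=(1, 2), keepdims=True)
    # Guard against channels with no positive gradient (alpha is 0 there anyway).
    safe_denom = np.where(denom > 0, denom, 1.0)
    alpha = np.where(grads > 0, 1.0 / safe_denom, 0.0)
    # Hadamard products, then sum over the first axis.
    return (alpha * positive * feats).sum(axis=0)
```

Because each channel is divided by its own total positive gradient, a channel with a few very large gradient values does not dominate the sum, which matches the motivation given for the pixel-level coefficient.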

3.2 Activation map union

Gradient-based CAM introduces noise due to the gradient. Although the denoising method in Section 3.1 can remove part of the noise, it cannot completely eliminate the background area unrelated to the target class. To further suppress the background area, we draw inspiration from region-based methods. In our approach, the feature map of each channel is used as a mask to activate the corresponding area in the original image. The activated area is then used as the input to the CNN, and the prediction score is used as the weight of the feature map. The weighted summation of these feature maps yields the class activation map, denoted as $L^{c}_{\mathrm{Region}}$.
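The region-based step can be illustrated with the following sketch. Several details are assumptions not stated in the text: `score_fn` is a hypothetical callable that wraps the CNN and returns the target-class score for an image, the feature maps are assumed to be already upsampled to the image size, and each mask is min-max normalized to [0, 1] before it is applied.

```python
import numpy as np
from typing import Callable


def region_cam(
    image: np.ndarray,
    feats: np.ndarray,
    score_fn: Callable[[np.ndarray], float],
) -> np.ndarray:
    """Weight each feature map by the class score of the image it masks.

    image: input of shape (3, M, N).
    feats: feature maps of shape (C, M, N), assumed already upsampled.
    score_fn: hypothetical wrapper returning the target-class score of an image.
    """
    cam = np.zeros(feats.shape[1:], dtype=float)
    for fmap in feats:
        span = fmap.max() - fmap.min()
        if span == 0:
            continue  # a constant map carries no spatial information
        mask = (fmap - fmap.min()) / span  # assumed min-max normalization
        weight = score_fn(image * mask)    # mask broadcasts over color channels
        cam += weight * fmap               # weighted sum of feature maps
    return cam
```

This loop needs one forward pass per channel, which is the source of the high computational cost attributed to region-based methods.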

By using $L^{c}_{\mathrm{Region}}$, the influence of the gradient on the class activation map is significantly reduced, and the background area can be effectively suppressed. However, for targets with distinctive features, the main part of the target may also be partially removed, leading to an incomplete class activation map. To address this issue and obtain a more complete representation of the main object while further suppressing the background, we propose a method to combine $L^{c}_{\mathrm{Denoising}}$ and $L^{c}_{\mathrm{Region}}$. The two class activation maps are merged using weights $\beta_{\mathrm{Denoising}}$ and $\beta_{\mathrm{Region}}$ for $L^{c}_{\mathrm{Denoising}}$ and $L^{c}_{\mathrm{Region}}$, respectively. The overall process is illustrated in the “Activation Map Union” block of Figure 1. In the following, we formulate this module in detail.

To combine the two types of activation maps using weights, we first need to determine their respective weights. The weight $\beta_{\mathrm{Denoising}}$ is formulated as:

$$
\beta_{\mathrm{Denoising}} = f_c\left(L^{c}_{\mathrm{Denoising}} \circ I_0\right) - f_c(I_b).
\tag{4}
$$

Here, the $\circ$ operation is applied to the denoised CAM $L^{c}_{\mathrm{Denoising}}$ and the original image, which means that $L^{c}_{\mathrm{Denoising}}$ is used as a mask to activate the corresponding part of the original image.

$f_c(L^{c}_{\mathrm{Denoising}} \circ I_0)$ denotes the score of the target category $c$ obtained by inputting the activation image, generated using $L^{c}_{\mathrm{Denoising}}$ as the mask, into the convolutional neural network, and $f_c(I_b)$ represents the score of the target category $c$ obtained by inputting the all-black image $I_b$ into the network. Therefore, $\beta_{\mathrm{Denoising}}$ can be understood as the contribution of the $L^{c}_{\mathrm{Denoising}}$ activation area to the score of the target category $c$. Similarly, $\beta_{\mathrm{Region}}$ can be understood as the contribution of the $L^{c}_{\mathrm{Region}}$ activation region to the target category $c$, which can be formulated as:

$$
\beta_{\mathrm{Region}} = f_c\left(L^{c}_{\mathrm{Region}} \circ I_0\right) - f_c(I_b),
\tag{5}
$$

where $f_c(L^{c}_{\mathrm{Region}} \circ I_0)$ denotes the score of the target category $c$ obtained by inputting the activation image generated using $L^{c}_{\mathrm{Region}}$ as the mask into the convolutional neural network, and $f_c(I_b)$ is the same all-black baseline score as in Equation 4.

Having obtained the score contributions $\beta_{\mathrm{Denoising}}$ and $\beta_{\mathrm{Region}}$ of the $L^{c}_{\mathrm{Denoising}}$ and $L^{c}_{\mathrm{Region}}$ activation regions to the target category $c$, respectively, we can merge the two types of activation maps using these contributions as weights:

$$
L^{c}_{\mathrm{De\text{-}Region}} = \beta_{\mathrm{Denoising}} \cdot L^{c}_{\mathrm{Denoising}} + \beta_{\mathrm{Region}} \cdot L^{c}_{\mathrm{Region}}.
\tag{6}
$$

By combining the two activation maps weighted by their respective contributions to the target category score, the resulting class activation map emphasizes the target object's main area (the high-scoring part) in the original image while suppressing the background area (the low-scoring part). This soft integration strategy lets the merge adapt to each input: the map that contributes more to the target score receives the larger weight. The approach helps to obtain a more complete representation of the target object while reducing background activation, thereby improving the interpretability and localization accuracy of the class activation map.
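Equations 4 to 6 translate fairly directly into code. In this sketch, `score_fn` is again a hypothetical wrapper around the CNN that returns the target-class score, both CAMs are assumed to be image-sized and scaled to [0, 1] so that they can act as masks, and the all-black image is represented by an array of zeros.

```python
import numpy as np
from typing import Callable, Tuple


def score_contribution(
    cam: np.ndarray, image: np.ndarray, score_fn: Callable[[np.ndarray], float]
) -> float:
    """beta = f_c(cam * image) - f_c(all-black image)."""
    baseline = score_fn(np.zeros_like(image))  # all-black image I_b
    return score_fn(cam * image) - baseline    # cam broadcasts over color channels


def union_maps(
    cam_denoising: np.ndarray,
    cam_region: np.ndarray,
    image: np.ndarray,
    score_fn: Callable[[np.ndarray], float],
) -> Tuple[np.ndarray, float, float]:
    """Merge two CAMs, each weighted by its score contribution."""
    beta_denoising = score_contribution(cam_denoising, image, score_fn)
    beta_region = score_contribution(cam_region, image, score_fn)
    merged = beta_denoising * cam_denoising + beta_region * cam_region
    return merged, beta_denoising, beta_region
```

Subtracting the all-black baseline means a map is credited only for the score it adds beyond what the network predicts from an empty input.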

3.3 Activation map selection

Combining the two activation maps using their respective scores as weights, as described in Section 3.2, does not always improve the explanatory power of the resulting activation map. One potential scenario is when the background area outside the target object in $L^{c}_{\mathrm{Denoising}}$ is not entirely suppressed, and the weight $\beta_{\mathrm{Denoising}}$ obtained from the CNN is greater than $\beta_{\mathrm{Region}}$. In this case, merging the two activation maps with the scores as weights may introduce redundant background components, which can negatively impact the final interpretation and localization accuracy of the class activation map.

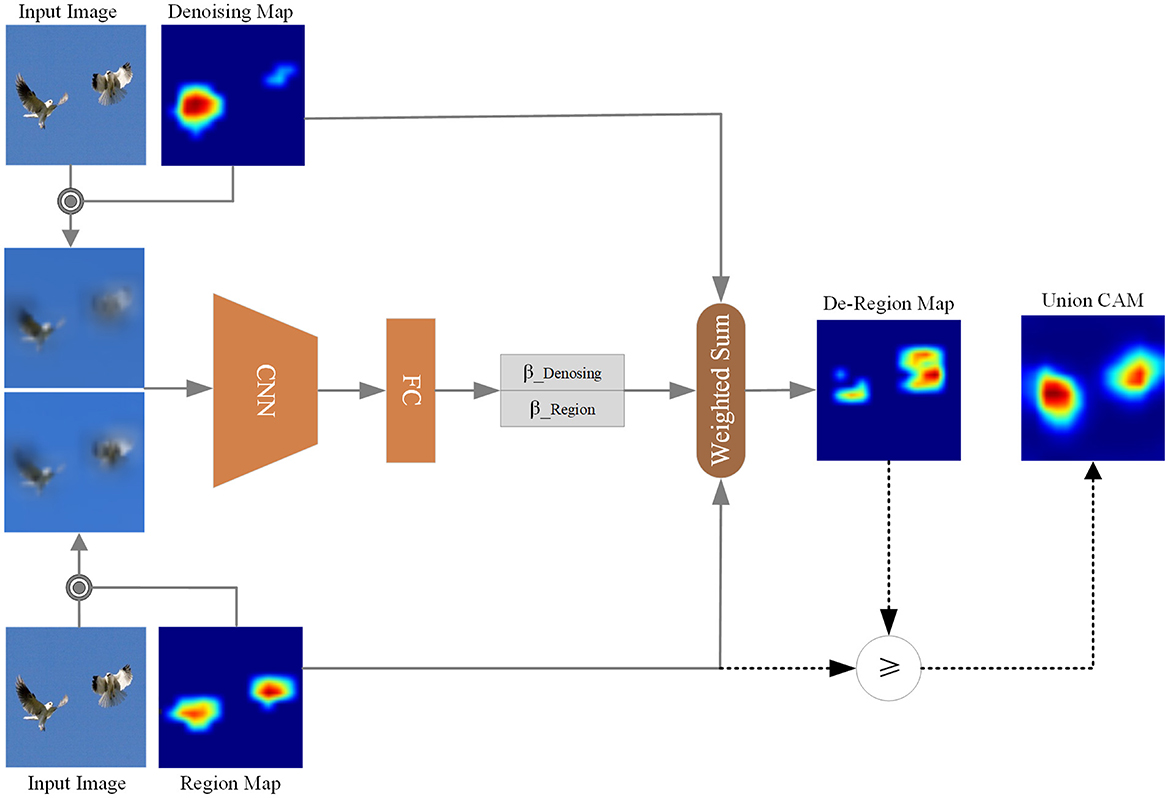

To mitigate the above issue, we propose the Activation Map Selection (AMS) method. Considering both $L^{c}_{\mathrm{De\text{-}Region}}$ and $L^{c}_{\mathrm{Region}}$, AMS chooses the class activation map that provides a more interpretable representation of the target category. Specifically, AMS selects the CAM that yields the higher score for the target category, which indicates better localization and interpretation of the target object. The overall workflow of the AMS method is illustrated in Figure 2.

Figure 2. Activation map selection. Based on the comparison between $\beta_{\mathrm{De\text{-}Region}}$ and $\beta_{\mathrm{Region}}$, the corresponding map is selected from the De-Region map and the Region map to form the UnionCAM.

We now formulate AMS. The score contribution $\beta_{\mathrm{Region}}$ of the $L^{c}_{\mathrm{Region}}$ activation region to the target category $c$ has been obtained from Equation 5, and the combined class activation map $L^{c}_{\mathrm{De\text{-}Region}}$ has been obtained from Equation 6. To select between the CAMs according to how well they explain the target category, we must first obtain the score contribution $\beta_{\mathrm{De\text{-}Region}}$ of the $L^{c}_{\mathrm{De\text{-}Region}}$ activation region to the target category $c$. Similarly, $\beta_{\mathrm{De\text{-}Region}}$ can be formulated as:

$$
\beta_{\mathrm{De\text{-}Region}} = f_c\left(L^{c}_{\mathrm{De\text{-}Region}} \circ I_0\right) - f_c(I_b).
\tag{7}
$$

After obtaining $\beta_{\mathrm{De\text{-}Region}}$, we select the final CAM by comparing $\beta_{\mathrm{De\text{-}Region}}$ with $\beta_{\mathrm{Region}}$. The decision can be formulated as:

$$
L^{c}_{\mathrm{UnionCAM}} =
\begin{cases}
L^{c}_{\mathrm{De\text{-}Region}}, & \text{if } \beta_{\mathrm{De\text{-}Region}} > \beta_{\mathrm{Region}}; \\
L^{c}_{\mathrm{Region}}, & \text{otherwise}.
\end{cases}
\tag{8}
$$

By combining soft and hard selection strategies, AMS enables a more flexible integration of gradient-based and region-based activation maps that adapts to different input characteristics. The weights $\beta_{\mathrm{Denoising}}$ and $\beta_{\mathrm{Region}}$ first softly combine the denoising map and the region map, which can sometimes introduce noise and thus blur the decision-making process. To compensate, Equation 8 applies a hard selection that falls back to the region map whenever the merged map does not score higher, so that the final map retains the activation regions that most benefit the decision.
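The hard selection in Equations 7 and 8 amounts to a single comparison. The sketch below assumes a hypothetical `score_fn` that wraps the CNN and returns the target-class score, and CAMs that are image-sized and scaled to [0, 1]; the function name `select_union_cam` is also illustrative.

```python
import numpy as np
from typing import Callable


def select_union_cam(
    cam_de_region: np.ndarray,
    cam_region: np.ndarray,
    image: np.ndarray,
    score_fn: Callable[[np.ndarray], float],
) -> np.ndarray:
    """Return the merged map only if it contributes more than the region map."""
    baseline = score_fn(np.zeros_like(image))  # score of the all-black image
    beta_de_region = score_fn(cam_de_region * image) - baseline
    beta_region = score_fn(cam_region * image) - baseline
    # Ties fall back to the region map, matching the "otherwise" branch.
    return cam_de_region if beta_de_region > beta_region else cam_region
```

Since the same baseline is subtracted from both contributions, the comparison reduces to comparing the two masked-image scores directly; the baseline is kept here only to mirror the equations.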

4 Experiments

In this section, we conduct experiments to evaluate the effectiveness of the proposed interpretation method. First, we provide a basic description of the datasets and data preprocessing for the experiments in Section 4.1. Second, in Section 4.2, we quantitatively evaluate UnionCAM against other mainstream class activation map methods using established evaluation metrics. Then, we qualitatively evaluate our method with visualizations on ILSVRC2012 (Russakovsky et al., 2015) in Section 4.3. Finally, in Section 4.4, we assess the effectiveness of each module proposed in this paper through ablation experiments.

4.1 Experimental setup

Experiments are performed on commonly used computer vision datasets, including the validation set of ILSVRC2012 (Russakovsky et al., 2015) and the VOC2007 test set (Everingham et al., 2015), as shown in Figure 3. For both datasets, all images were resized to 3 × 224 × 224, then converted to tensors, and normalized to the range [0, 1]. No additional preprocessing was applied. We use the pretrained torchvision model VGG16 (Simonyan and Zisserman, 2014) as the base classifier. Unless stated otherwise, the θ parameter in UnionCAM is set to 10. To ensure a fair comparison, all activation maps are upsampled to 224 × 224 using bilinear interpolation.

Figure 3. Examples from the ILSVRC2012 and VOC2007 datasets.

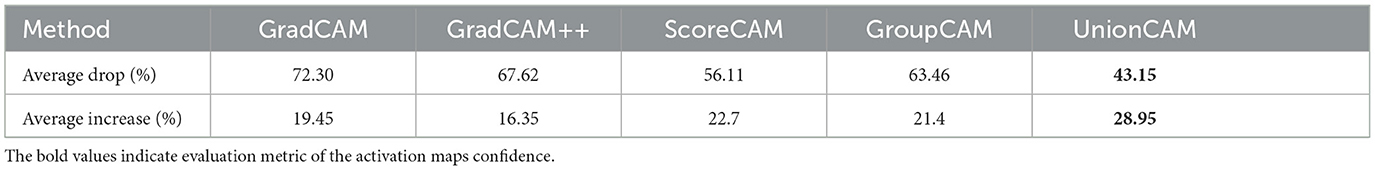

4.2 Quantitative evaluation of evaluation indicators

We first evaluate the confidence of the activation maps generated by UnionCAM on the object recognition task employed in Chattopadhay et al. (2018). The original input is multiplied point-wise with the activation map so that only the highlighted regions remain active, and we observe the resulting change in the score of the target class. We adopt the metrics from Chattopadhay et al. (2018), where the average drop is formulated as $\sum_{i=1}^{N} \frac{\max(0,\, y_i^c - o_i^c)}{y_i^c} \times 100$, and the average increase is formulated as $\sum_{i=1}^{N} \frac{\mathrm{Sign}(y_i^c < o_i^c)}{N} \times 100$. Here, $y_i^c$ denotes the score of category $c$ predicted for the $i$-th original image, and $o_i^c$ denotes the score predicted after the activation map activates certain parts of that image. Sign is an indicator function that returns 1 if the input condition is true. Experiments are performed on 2,000 images randomly selected from the ImageNet (ILSVRC2012) validation set. Our algorithm consumes 2.22 GB of memory during operation, and the average processing time per image is 1.16 s, measured on an NVIDIA RTX A6000 GPU. The results are summarized in Table 1. Similarly, the experimental results on the VOC2007 test set are shown in Table 2.
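For concreteness, the two metrics can be computed as in the sketch below. The function names and the plain-list inputs are illustrative assumptions. The drop is divided by the number of images, as the name "average drop" suggests, although the formula as quoted leaves that division implicit.

```python
from typing import Sequence


def average_drop(original: Sequence[float], masked: Sequence[float]) -> float:
    """Mean relative score drop (in percent) after masking with the CAM.

    original: scores y_i^c on the unmodified images (assumed positive).
    masked:   scores o_i^c on the CAM-masked images.
    """
    drops = [max(0.0, y - o) / y for y, o in zip(original, masked)]
    return 100.0 * sum(drops) / len(drops)


def average_increase(original: Sequence[float], masked: Sequence[float]) -> float:
    """Percentage of images whose score rises after masking with the CAM."""
    wins = sum(1 for y, o in zip(original, masked) if y < o)
    return 100.0 * wins / len(original)
```

For example, with original scores `[0.8, 0.5, 0.4]` and masked scores `[0.4, 0.6, 0.4]`, only the first image drops (by half of its score) and only the second increases, so the average drop is about 16.7 and the average increase is about 33.3.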

Table 1. Recognition evaluation results on the ILSVRC2012 dataset (the smaller the average drop, the better, and the larger the average increase, the better).
